A company rushed into deploying AI analytics before fixing its data foundation. The agent could answer any data question in seconds, and what the team discovered first surprised everyone. Half their reports had been answering the wrong question for two years. The AI itself was not wrong. However, the data underneath it was ungoverned, inconsistently defined, and accessible to people who had no business shaping what leadership saw.

This pattern is showing up across organizations right now. The rush toward deploying AI analytics is accelerating, but teams are reversing the sequence. Instead of building the foundation first, they roll out AI on top of data environments that were never designed to support it. As a result, the output is faster answers to the wrong questions.

Three mistakes show up consistently before things go wrong. Here is what they are and how to avoid them.

Mistake 1: Deploying AI Analytics Before Defining Who Owns the Data

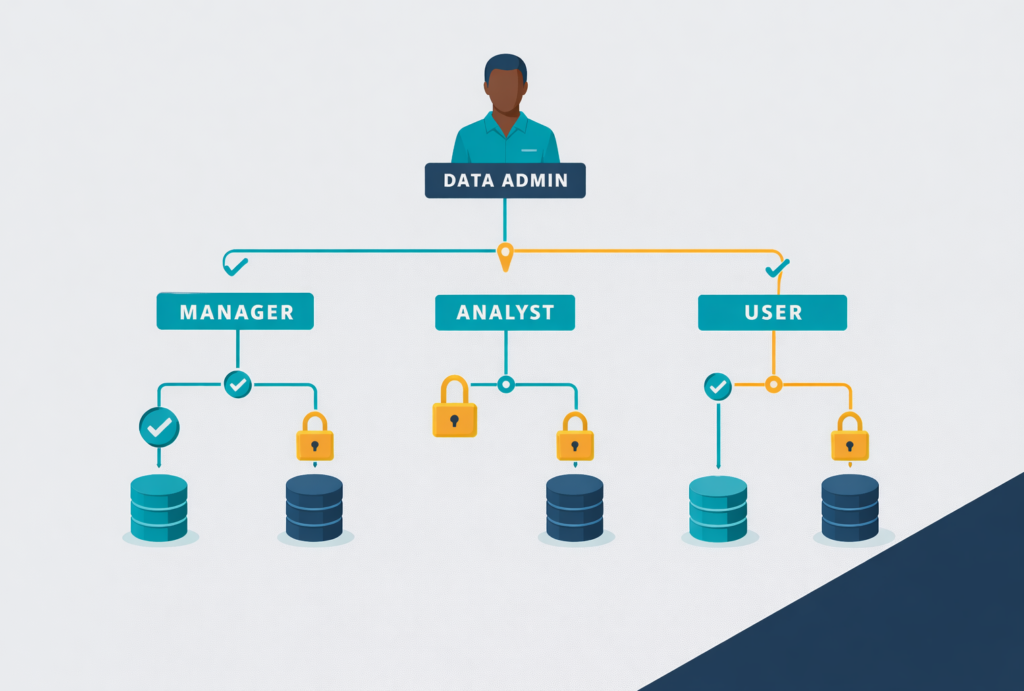

In most organizations, data access expands quietly over time. A team needs a report, someone adds a person to a database, and nobody reviews that permission again. Within a few years, sensitive information moves across teams without clear boundaries, and nobody is fully sure what anyone can see.

This is not just a security problem. It is, more importantly, a data quality problem. When too many people touch the data, modify it, copy it, or build reports from it without governance, the foundation becomes unreliable. Different teams calculate the same metric differently, so leadership ends up seeing three versions of the same number and trusts none of them.

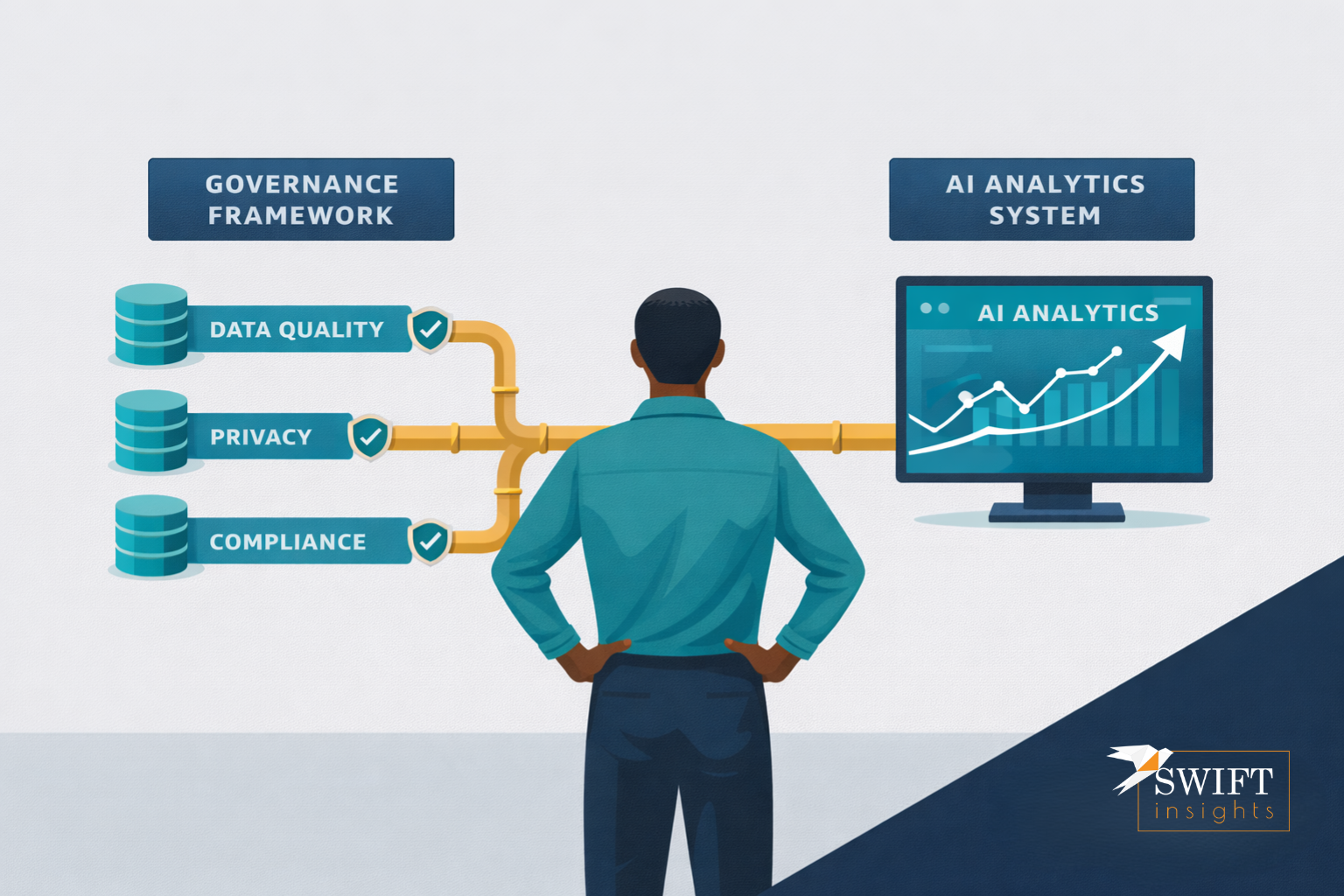

Gartner predicts that, through 2026, organizations will abandon 60 percent of AI projects that lack AI-ready data. In other words, the cost of skipping governance is not a distant risk. It is already showing up in failed projects. Before deploying AI analytics, define ownership of every data asset your organization relies on. Restrict access based on need rather than habit, and put controls in place so the right people have the right data at the right time. That foundation is what makes AI results trustworthy rather than just fast. Read Gartner’s full research on AI-ready data.
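
To make "define ownership" and "restrict access based on need" concrete, here is a minimal Python sketch. It is an illustration only, not a description of any particular governance product: the `DataAsset` model, the asset names, and the role names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    """A governed dataset with one accountable owner (hypothetical model)."""
    name: str
    owner: str
    # Roles that need this asset for their work; every other role is denied.
    allowed_roles: set[str] = field(default_factory=set)


def unowned_assets(assets: list[DataAsset]) -> list[str]:
    """Return the names of assets that have no named owner."""
    return [a.name for a in assets if not a.owner.strip()]


def can_read(asset: DataAsset, role: str) -> bool:
    """Deny by default: only explicitly listed roles may read the asset."""
    return role in asset.allowed_roles


# Hypothetical inventory: one asset has an owner, one does not.
assets = [
    DataAsset("revenue_daily", owner="finance-data", allowed_roles={"finance_analyst"}),
    DataAsset("customer_pii", owner="", allowed_roles={"support_lead"}),
]

print(unowned_assets(assets))                    # ['customer_pii']
print(can_read(assets[0], "finance_analyst"))    # True
print(can_read(assets[0], "marketing_intern"))   # False
```

The design choice worth noting is the default: a role that nobody listed gets no access, so permissions have to be granted deliberately instead of accumulating by habit.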

Mistake 2: Assuming Deploying AI Analytics Reduces the Need for Human Judgment

The promise of autonomous BI agents is straightforward. An analyst asks a question in plain language, the system pulls the data, runs the analysis, and returns an answer in seconds without SQL queries or a backlog to wait through.

The problem, however, is that this only works reliably when the data underneath is governed and humans have set clear rules on top of it. An agent that queries data defined by nobody and owned by everyone returns confident answers built on unstable ground. Because the answer arrives fast and looks clean, the error is actually harder to catch than a broken spreadsheet.

McKinsey research shows that high-performing organizations define processes for determining how and when model outputs need human validation. Moreover, these organizations significantly outperform those that do not. Deploying AI analytics does not remove the need for human judgment. Instead, it changes where teams apply that judgment. The best organizations are therefore not replacing analysts. They are repositioning them as the people who build guardrails, validate outputs, and codify the business rules that make an agent trustworthy in production. The query is automatable, but the judgment behind it is not, and that distinction matters more than most organizations realize before they start. See McKinsey’s State of AI 2025 for the full findings.
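
A process for deciding when an agent's output needs human validation can start as a few explicit rules. The Python sketch below is one possible shape under stated assumptions: the `AgentAnswer` fields, the audience labels, and the two rules are hypothetical examples of what an analyst team might codify, not rules taken from the McKinsey research.

```python
from dataclasses import dataclass


@dataclass
class AgentAnswer:
    """An answer returned by an analytics agent (hypothetical structure)."""
    metric: str
    value: float
    audience: str                     # e.g. "team" or "board" (assumed labels)
    uses_certified_definition: bool   # True if the metric definition was approved


# Assumed policy: answers for these audiences always get a human check.
HIGH_STAKES_AUDIENCES = {"board", "regulator"}


def needs_human_review(answer: AgentAnswer) -> bool:
    """Route the answer to an analyst when either rule fires."""
    # Rule 1: the metric was computed from a definition nobody has approved.
    if not answer.uses_certified_definition:
        return True
    # Rule 2: the answer is headed to a high-stakes audience.
    return answer.audience in HIGH_STAKES_AUDIENCES


routine = AgentAnswer("churn_rate", 0.042, audience="team", uses_certified_definition=True)
board_pack = AgentAnswer("churn_rate", 0.042, audience="board", uses_certified_definition=True)

print(needs_human_review(routine))      # False
print(needs_human_review(board_pack))   # True
```

The agent still runs every query. What the analyst owns is the rule set that decides which answers go out unreviewed.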

Mistake 3: Treating Governance as Something to Fix After the AI Is Already Running

The most expensive version of this mistake looks like this. Leadership approves a budget for AI analytics. The team deploys the tool and the first set of results lands in a board meeting. Someone asks where the number came from, and nobody can answer cleanly. The audit trail does not exist, the team never documented the metric definition, and six teams touched the data with six different definitions of the same field.

Retrofitting governance onto a system that was built without it is slow and expensive, because every undocumented definition and untracked change has to be reconstructed after the fact. As a result, the teams that try to add governance after deployment spend far more time, money, and credibility than those that built it in from the start.

The practical path is therefore sequential. Define ownership before deploying agents. Restrict access before scaling automation, and build the audit trail before the regulator asks for it. Ultimately, governance is not a constraint on deploying AI analytics. It is the infrastructure that makes AI analytics worth deploying at all.
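
As a rough illustration of an audit trail that can answer "where did this number come from," the Python sketch below stores one record per reported figure. Every name in it (`AuditRecord`, `record_answer`, the metric, the tables, the owner) is hypothetical, and the in-memory list stands in for whatever append-only store a real system would use.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """What was reported, from which sources, under which definition."""
    metric: str
    definition_version: str
    owner: str
    source_tables: tuple[str, ...]
    value: float
    computed_at: str


def record_answer(log: list[str], metric: str, definition_version: str,
                  owner: str, source_tables: tuple[str, ...],
                  value: float) -> AuditRecord:
    """Append one JSON line per reported number so it can be traced later."""
    rec = AuditRecord(
        metric=metric,
        definition_version=definition_version,
        owner=owner,
        source_tables=source_tables,
        value=value,
        computed_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(rec)))
    return rec


audit_log: list[str] = []
record_answer(audit_log, "net_revenue", "v3", "finance-data",
              ("orders", "refunds"), 1_250_000.0)

# Later, when someone asks where the number came from:
entry = json.loads(audit_log[0])
print(entry["metric"], entry["definition_version"], entry["source_tables"])
```

Recording the definition version alongside the value is the part that matters most here: it shows which of several competing definitions produced the figure on the slide.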

Swift Insights works with organizations at exactly this intersection. Our team builds the governance frameworks and data pipelines that make executive dashboards reliable and AI analytics trustworthy in production. Explore our data governance services or learn more about Analytics as a Service.

The Analyst Role Is Not Disappearing. It Is Becoming More Important.

There is a version of the AI analytics story that treats the analyst as the problem to replace. That version, however, fundamentally misunderstands what analysts actually do.

Teams are automating the technical work of writing queries, pulling data, and building reports. That part is real. Nevertheless, great analysts were never valuable because of their SQL. Their value came from understanding what question the business was actually asking, knowing which metric definition matched the decision on the table, and catching the edge case the model did not know to look for.

As AI takes on more of the execution layer, the human role shifts to the judgment layer. The analyst who sets guardrails, validates logic, and codifies business rules becomes one of the most valuable people in the room. The shift is therefore not from analysts to AI. It is from analysts as query runners to analysts as governance architects. Organizations that understand this distinction will get real, lasting value from deploying AI analytics, while those that do not will get fast answers they cannot trust. See how Swift Insights supports this shift through data engineering.

What to Sit With

If your team deployed an AI analytics tool on your data environment today, how confident are you that the answers it returns reflect the business reality your leadership team needs to act on?