As 2025 comes to a close, many leaders are taking a hard look at the state of their data programs. On the surface, the year appeared productive. Dashboards went live, analytics teams grew, AI initiatives showed early promise, and new tools were introduced across the organization. Yet despite all of this activity, a quieter reality emerged during critical moments: decision confidence did not improve at the same pace as data output.

That gap became one of the defining patterns of the year. Organizations invested heavily in analytics and artificial intelligence, but when pressure increased, leaders still struggled to move quickly and decisively. The issue was not a lack of data. It was something more structural.

The problem was not technology

Throughout 2025, most data programs were evaluated through a technical lens. Leaders asked whether dashboards were adopted, whether models performed as expected, and whether reports refreshed on time. These questions were reasonable, but they focused on execution rather than impact.

In practice, the technology usually worked. Dashboards rendered correctly, pipelines stayed online, and models produced outputs. However, none of that guaranteed clarity when decisions became difficult. The real problem was not technical failure. It was that data initiatives were designed to deliver information, not to support ownership over decisions.

As a result, organizations generated more analytics than ever before, while decision-making friction remained largely unchanged.

Delivery quietly replaced ownership

One of the most common patterns in 2025 was the gradual shift from ownership to delivery. Dashboards were handed off to business teams, insights were shared broadly, and analytics outputs circulated widely. On paper, this looked like progress.

The challenge appeared when outcomes were questioned. At that point, it was often unclear who owned the definitions behind the metrics, who stood behind the numbers under scrutiny, or who was accountable when the data conflicted with operational reality.

Without explicit ownership, analytics became informative rather than decisive. This dynamic helps explain why so many analytics teams remained reactive throughout the year, responding to requests instead of shaping decisions. The same pattern, explored in "Why Most Analytics Teams Are Stuck in Reactive Mode," reflects a broader structural issue rather than a capability gap.

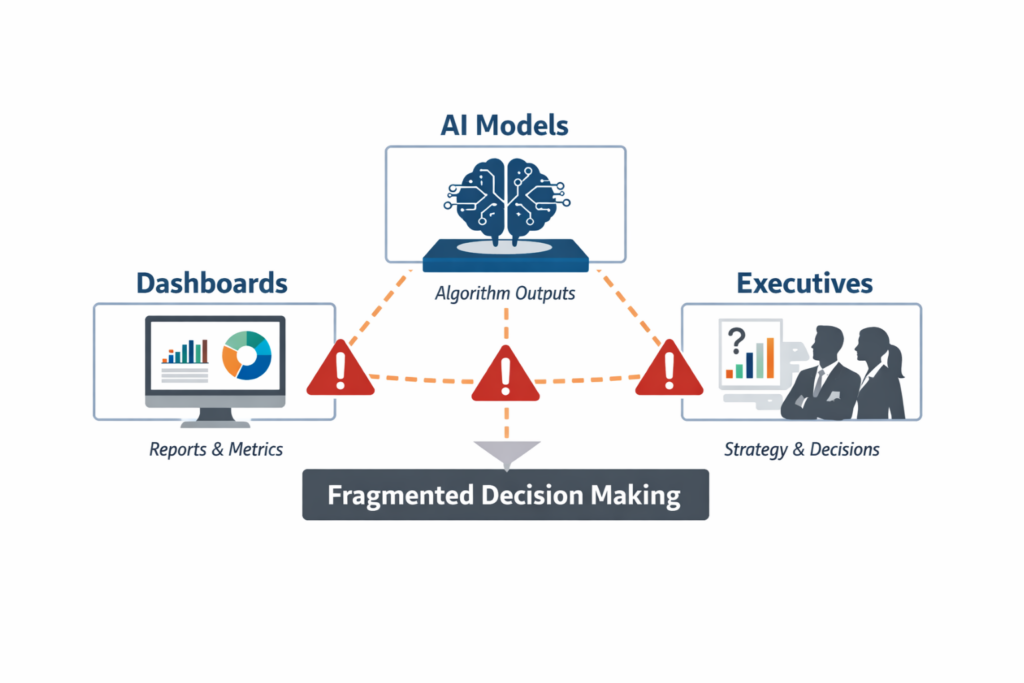

Dashboards, AI, and analytics struggled for the same reason

Another misconception in 2025 was treating dashboard adoption, AI performance, and analytics maturity as separate challenges. In reality, they struggled in remarkably similar ways.

Dashboards failed to hold up under executive pressure. AI programs delivered early value but stalled as they scaled. Analytics teams stayed busy but under-leveraged. These outcomes were not isolated. They shared a common root cause.

Decisions were never clearly designed into the system.

Dashboards were optimized for reporting activity rather than for supporting judgment. AI models were trained to surface patterns but not to resolve ambiguity. Analytics teams were measured on output instead of decision impact. When governance, definitions, and accountability were weak, every layer of the data stack inherited the same limitations.

This is why many organizations eventually realized that the real return on AI depended far more on data quality and structure than on model sophistication. That shift in thinking is examined in "Why the Real ROI of AI Comes From Data Quality, Not Models."

Governance was treated as overhead instead of infrastructure

Governance also played a central role in what went wrong during 2025. In many organizations, it was deferred, minimized, or framed primarily as a compliance requirement. Definitions were allowed to drift, metrics slowly changed meaning, and teams optimized for different outcomes without realizing it.

These inconsistencies rarely caused problems during routine reporting. They surfaced when stakes increased and decisions carried real consequences. At that point, disagreements over definitions and assumptions undermined trust in the data itself.

Strong data programs treat governance as decision infrastructure, not as control. Shared definitions, clear escalation paths, and explicit decision rights are what allow analytics to scale with confidence. Where governance matured, analytics adoption held. Where it did not, credibility eroded.

Research from MIT Sloan Management Review reinforces this point, noting that decision-driven analytics succeeds only when decision ownership and context are designed into the system. See "Leading With Decision-Driven Data Analytics."

Internal teams were asked to carry too much alone

The year also exposed a structural strain on internal analytics and BI teams. Many were expected to maintain platforms, deliver dashboards, support executives, experiment with AI, and respond to ad hoc questions simultaneously. All of this happened while priorities, definitions, and expectations continued to shift.

Even strong teams struggled under these conditions, not because of skill gaps, but because they were positioned as service providers rather than partners in decision design. As complexity increased, more organizations recognized the value of external reinforcement, not as a replacement for internal teams, but as a way to stabilize execution and add perspective. This realization is reflected in "Why Your Internal BI Team Needs a Partner to Tackle Backlog."

The uncomfortable truth about 2025

Looking back, the most consistent mistake of 2025 was not underinvestment in data. It was a misunderstanding of what data programs are meant to support.

Most initiatives focused on delivering information. Very few were designed to support accountable decision-making under uncertainty. Until analytics, dashboards, and AI are built around how leaders actually decide, this gap will persist.

This is not a tooling problem. It is a design problem.

What this sets up for 2026

The opportunity heading into 2026 is not to deploy more dashboards or launch additional pilots. It is to rethink how decisions are supported, governed, and owned.

Organizations that treat data as decision infrastructure will move faster and with greater confidence. Those that continue to optimize for delivery alone will keep asking why clarity remains elusive.

The pattern became visible in 2025. What changes next will determine whether it repeats.