Many data and AI programs show strong early momentum. Dashboards go live. Models surface useful signals. Leadership feels confident that progress is happening. Then adoption slows. Decision speed drops. Teams quietly fall back to spreadsheets, meetings, and intuition.

Most organizations no longer struggle to generate data insights.

They struggle to turn analytics, dashboards, and AI outputs into repeatable business decisions.

This is rarely a technology problem.

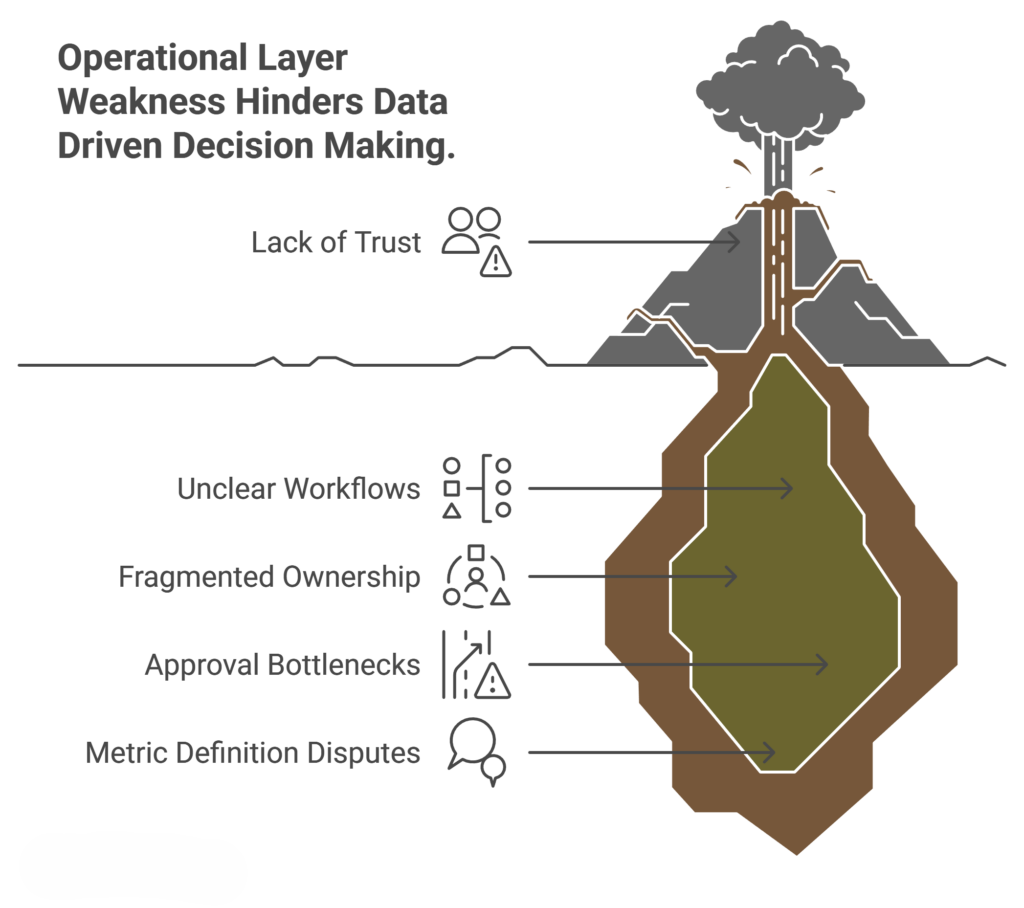

Where Data and AI Programs Break Down

What usually fails is the execution layer between insight and action. Ownership is unclear. Metrics mean different things to different teams. Manual approvals pile up. As a result, even strong analytics become hard to trust and harder to scale.

This pattern shows up across industries:

- Dashboards exist, but teams debate definitions instead of acting, a challenge we often see when organizations lack a comprehensive, shared view of their data.

- Pipelines run, but small changes create downstream surprises, especially when internal teams are overwhelmed by delivery backlogs and could benefit from external analytics partners.

- AI pilots show promise, yet leaders hesitate to rely on them without operational confidence, a common issue when data-driven decision-making is not yet embedded in day-to-day operations.

The Hidden Execution Gap

Adding more reports or platforms rarely solves this. In fact, it often increases friction. Real leverage comes from tightening execution.

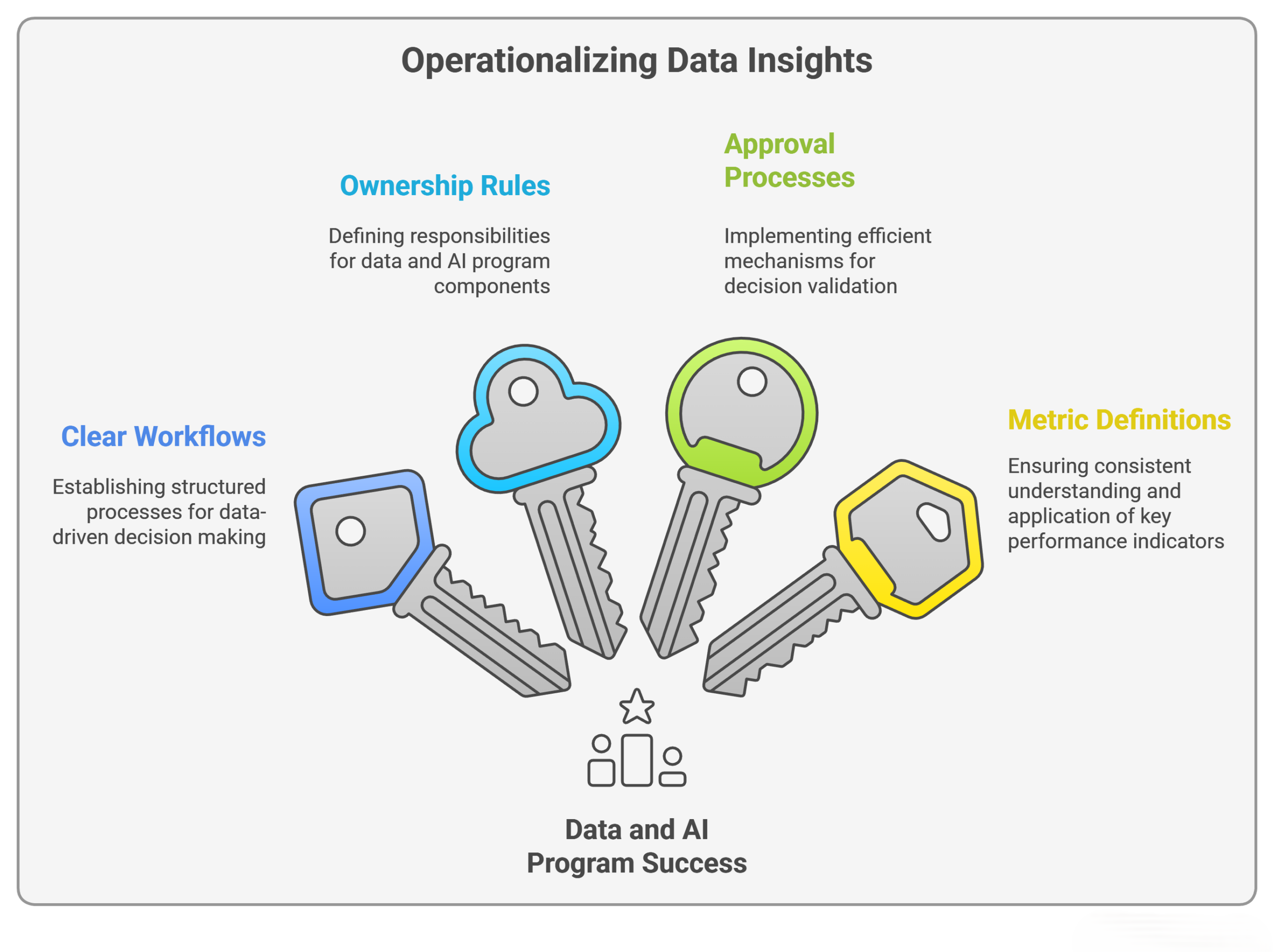

That means clarifying ownership, aligning metrics directly to decisions, and removing unnecessary review loops. It also means designing workflows where insights naturally trigger action, similar to how automated dashboards are redefining decision-making in high-performing organizations.
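For teams that want something concrete, here is a minimal Python sketch of what binding a metric to an owner and a decision could look like. It is an illustration under stated assumptions, not a description of any particular platform: the `DecisionRule` type, the `evaluate` helper, and the churn metric, threshold, and owner are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class DecisionRule:
    """Binds one metric to one definition, one owner, and one predefined action."""
    metric: str                          # single agreed metric name
    definition: str                      # the one definition every team uses
    owner: str                           # person or role accountable for acting
    should_act: Callable[[float], bool]  # condition that turns a value into a decision
    action: str                          # what happens when the condition holds


def evaluate(rule: DecisionRule, value: float) -> Optional[str]:
    """Return the action to take for this value, or None if no action is needed."""
    if rule.should_act(value):
        return f"{rule.owner}: {rule.action} ({rule.metric}={value})"
    return None


# Hypothetical rule: the metric, threshold, owner, and action are made up
# purely for illustration.
churn_rule = DecisionRule(
    metric="weekly_churn_rate",
    definition="customers cancelled this week / active customers at week start",
    owner="retention_lead",
    should_act=lambda v: v > 0.03,
    action="launch win-back campaign",
)

print(evaluate(churn_rule, 0.045))  # rule fires, so an action string is returned
print(evaluate(churn_rule, 0.01))   # below threshold, so no action
```

The point of the sketch is that the definition, the owner, and the action live in one place, so a metric crossing its threshold leads to a named next step rather than a debate or an approval queue.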

When teams fix this layer, adoption increases without force. Decision speed improves without more meetings. AI starts delivering value without constant explanation.

Turning Data and AI Programs Into Outcomes

At Swift Insights, most of our work happens in this gap. We focus less on producing additional visuals and more on removing the operational friction that prevents analytics from driving outcomes. This approach reflects what we see in top-performing dashboard teams and in organizations that treat execution as a first-class design problem.

The difference between data that looks good and data that drives results is rarely technical.

It is operational.

If your data and AI program looks strong on paper but your teams still struggle to move faster or act with confidence, that is usually where the real work begins.